We talk a lot about AI right now.

We talk about copilots, agents, RAG, vector databases, knowledge graphs, data platforms, and automation everywhere. We talk about models getting smarter, inference getting cheaper, and interfaces getting more natural.

But I think one of the most important topics is still not discussed enough: context.

Not just data.

Not just documentation.

Not just prompts.

Context.

And more importantly, how we engineer context for the next generation of enterprise applications.

Because in the end, an AI system without context is just a very convincing probabilistic machine. It can sound smart. It can generate something plausible. It can even appear useful for a while.

But without the right context, it does not understand your company, your processes, your constraints, your history, your trade-offs, or your operating reality.

That is where, in my opinion, the real work begins.

That is where context engineering starts to matter.

Every team already has context

In any company, every team has context.

Every platform has context. Every business unit has context. Every domain, every product, every support team, every architecture group, every operational flow has context that is specific to them.

That context lives in many places:

- documentation

- tickets

- runbooks

- diagrams

- architecture decisions

- integration flows

- business rules

- production incidents

- tribal knowledge

- naming conventions

- process exceptions

- historical decisions nobody wrote down properly, but everybody somehow remembers

So the problem is not that companies have no context.

The problem is that this context is fragmented, uneven, local, and often trapped.

For years, enterprises have tried to solve this by centralizing data. Push everything into data lakes. Consolidate into reporting platforms. Standardize governance. Build a golden source. Try to create one place where everything is supposed to become coherent.

That effort has value, of course.

But I do not think it solves the full problem.

Because context in a real enterprise is not naturally centralized. It is distributed, decentralized, and constantly evolving.

And honestly, it will stay that way.

So maybe the goal should not be to centralize everything into a single giant system of truth.

Maybe the goal should be to federate distributed knowledge without destroying the richness of local context.

That is a very different design problem.

The future is not hyper-centralization, it is federation

I increasingly believe that the next wave of enterprise architecture will not be built around one platform trying to own everything.

The useful knowledge is created everywhere.

It is created by engineering teams, product teams, support teams, architects, process owners, operations, finance, logistics, integration specialists, and sometimes by the few people who have simply been around long enough to remember why something was built a certain way.

Trying to flatten all of that too early into a rigid central model usually creates two bad outcomes.

First, you lose nuance.

Second, people stop contributing, because the system becomes too heavy, too abstract, or too far from how they actually work.

So the challenge is not to erase local knowledge.

The challenge is to make local knowledge composable.

That means each team should be able to build and maintain its own context, in its own domain, while still contributing to a larger enterprise-wide knowledge fabric.

That is what I mean by context engineering.

If you want to break silos, start with domains, bounded contexts, and end-to-end flows

One of the biggest mistakes we still make in IT is that we model the enterprise too much through applications.

We think app by app. Team by team. System by system.

But that is not how value flows through the business.

The most meaningful context in an enterprise is rarely application-centric. It is usually tied to domains, bounded contexts, business processes, value chains, and the flows that cut across them.

This is where ideas from domain-driven design become especially relevant.

Because if we want context to be useful, reusable, and eventually consumable by AI systems, then we need much better structure around the business itself. We need to understand where a domain starts, where it ends, what language it uses, what business objects it owns, and how it interacts with other domains.

That is exactly the value of bounded contexts.

They help us define coherent areas of meaning inside the enterprise. They help us avoid mixing concepts that should stay distinct. And they give us a much stronger foundation for building knowledge systems that reflect reality instead of flattening it.

But bounded contexts alone are not enough.

Because value does not stop at the boundary of a single domain.

It moves through end-to-end business processes and across value chains.

A customer order.

A supply chain exception.

A stock reconciliation.

A financial posting.

A warehouse event.

An intercontinental logistics flow.

A customer promise that needs to be fulfilled across multiple systems.

These are not just technical flows. They are business flows moving across domains, platforms, interfaces, and operational teams.

That is why context engineering has to care deeply about what I would call architecture for flows.

Not just application architecture.

Not just data architecture.

Not just integration architecture.

Architecture for flows.

How does information move across the enterprise?

How does a business object evolve from one step to another?

Where are the handoffs?

Where are the breaks in meaning?

Where are the frictions, the delays, the rework loops, the hidden dependencies?

If we can document and structure those flows properly, in a way that is transverse, explicit, and consistent, then we create something extremely valuable.

We create a shared understanding of how the enterprise actually operates.

And that is where I think the opportunity is huge.

Because once those business processes, bounded contexts, and cross-domain flows are described in a structured and uniform way, they become much easier to:

- understand

- govern

- evolve

- connect to applications and interfaces

- expose to support and operations

- and eventually serve to AI systems

That is the real goal.

To make the flow across the enterprise as visible, as smooth, and as intelligible as possible.

Not only in runtime, but also in the way we document, govern, and improve it.

Because the better we structure these flows, the easier it becomes to build systems, teams, and agents that can operate on top of them intelligently.

Knowledge should be treated like a platform asset

I think one of the biggest mindset shifts we need is this:

knowledge is not a side artifact, it is a platform asset.

It should not be treated as an afterthought.

It should not be treated as a project deliverable that gets stale the day after go-live.

It should not live in closed systems where it is hard to update, hard to version, hard to search, and impossible to reuse properly.

If knowledge matters, then it needs an operating model.

Personally, I strongly believe in a model where knowledge is:

- written in Markdown

- stored in an open, versioned, automatable platform

- reviewed through pull requests or merge requests

- traceable

- auditable

- versioned over time

- automatically processable through pipelines

I am using GitLab here as a concrete example, because it naturally offers many of the capabilities that make this approach practical. But the point is not GitLab alone. It could absolutely be another platform with the same kind of characteristics.

What matters is not the brand of the tool. What matters is the model behind it.

You need a platform that gives you, at minimum:

- version control

- collaborative review workflows

- history and traceability

- ownership and governance

- automation hooks

- API access

- pipeline integration

- strong support for open formats like Markdown

GitLab happens to be a very relevant example for this kind of use case, but it is not the only possible answer. The broader point is that enterprises need platforms that can treat knowledge as something living, governed, reusable, and machine-consumable.

We already know how to operate code this way.

Now we need to apply the same discipline to knowledge.

Not just documentation as code.

Knowledge as code.

That changes everything.

A collective playground for business, build, and operations

What makes this model especially powerful is that this type of platform can become much more than a repository.

It can become a collective playground.

A shared environment where different parts of the organization contribute to the same living body of knowledge, each from their own perspective, each adding a piece of the truth.

You can imagine business teams capturing functional expertise:

- what the process is really trying to achieve

- what the critical business rules are

- where the exceptions matter

- what the real pain points are

- what outcomes the business actually cares about

Then project and build teams can enrich that same body of knowledge with implementation reality:

- interfaces

- workflows

- dependencies

- integration patterns

- technical constraints

- architectural choices

- known trade-offs

And then operations and support teams can keep grounding that knowledge in frontline reality:

- how the system behaves in production

- what the happy paths look like

- what the unhappy paths look like

- what actually breaks

- how incidents are diagnosed

- what workarounds exist

- what business continuity mechanisms are in place

- where the real operational fragility sits

This is where it becomes very interesting.

Because instead of having fragmented documents for business, separate specs for delivery, and disconnected runbooks for support, you start building a shared contextual layer that reflects how the service really works end to end.

That is far more valuable than static documentation.

That becomes operational knowledge.

From frontline reality back into the context system

What I find especially compelling is the feedback loop this creates.

The document is no longer something written once and forgotten.

It becomes something that evolves based on what happens on the frontline.

A production incident teaches you something, update the context.

A support team discovers a recurrent failure mode, update the context.

A business team clarifies a rule or an exception, update the context.

An architecture decision changes an interface or a dependency, update the context.

That means the knowledge base is no longer disconnected from reality.

It becomes the place where the organization continuously captures what it is learning.

And that matters a lot, because continuity does not come from writing documentation once. It comes from building a system where documentation keeps getting fed by delivery, operations, and business usage.

Done well, this kind of living documentation could become the main reference artifact.

Honestly, I think that is where we should head.

Not dozens of disconnected documents, each with partial truth, each aging at a different speed.

But a smaller number of living, governed, versioned knowledge artifacts that serve as the actual reference layer for how the service works.

From knowledge repositories to context pipelines

Once knowledge is structured in open, versioned, machine-readable formats, something really interesting happens.

It stops being passive.

It becomes something you can process.

You can build context pipelines.

Instead of storing documents and hoping people find them, you can build automated flows that:

- collect curated Markdown knowledge

- enrich it with metadata

- map it to business domains, bounded contexts, processes, and systems

- split it into meaningful chunks

- generate embeddings

- create semantic search indexes

- expose that context to applications, copilots, or agents

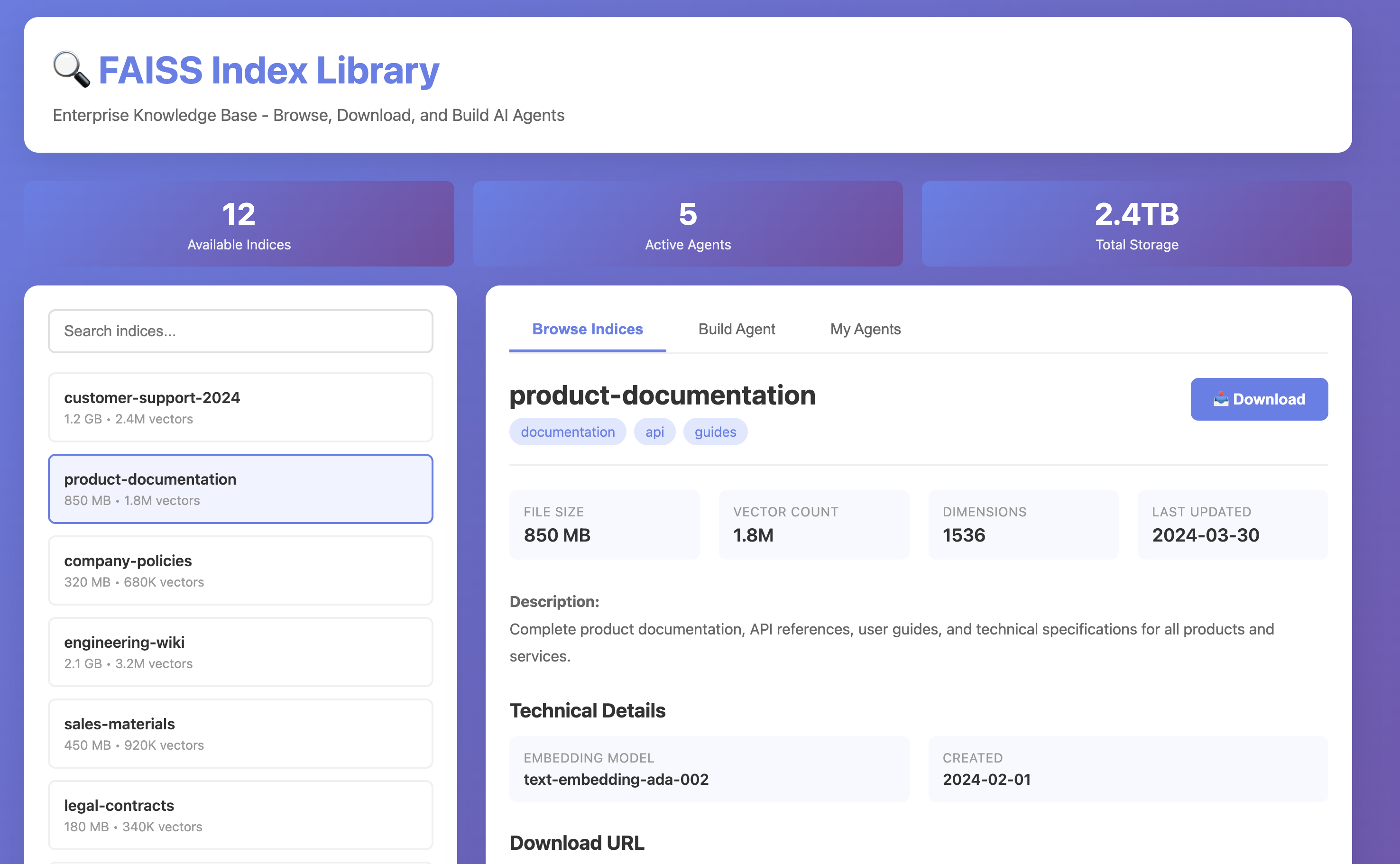

This is where technologies like FAISS become really exciting.

FAISS makes similarity search over vectors practical at scale. In simple terms, it gives you a way to search for meaning, not just for keywords.

And that matters a lot in enterprise environments.

Because real enterprise knowledge is messy. Different teams use different words. The same concept appears in different forms. Processes evolve. Terminology drifts. New systems inherit old names. Some of the most important information is not expressed in a perfectly normalized way.

Traditional keyword search is often not enough.

You need systems that can retrieve semantically relevant knowledge from a distributed body of context.

And once you can do that reliably, you stop building isolated AI demos and start building actual enterprise capabilities.

The real AI opportunity is not better prompting, it is better context systems

A lot of AI work in the enterprise still feels too prompt-centric.

People focus on the model.

They focus on the interface.

They focus on the agent behavior.

They focus on the orchestration logic.

All of that matters, but I think the bigger differentiator will be elsewhere.

The real advantage will come from companies that know how to build, govern, and serve context well.

Because an agent is only as useful as the context it can access.

If it does not know:

- which systems matter

- which business objects are canonical

- which rules are binding

- which exceptions are tolerated

- which flows are critical

- which systems are sources of truth

- which historical decisions shaped the current state

then it will produce output that looks polished but lacks operational depth.

That is not intelligence.

That is surface area.

The companies that win will be the ones that transform their internal knowledge into something structured, accessible, governable, and reusable across AI use cases.

A portal of context, not just a pile of documents

One idea I find compelling is to think about context as a catalog of reusable enterprise knowledge assets.

Imagine an internal portal, or library, where teams publish governed context packages by domain.

For example:

- finance

- supply chain

- customer orders

- intercontinental flows

- warehouse operations

- enterprise architecture

- incident knowledge

- support runbooks

- product capabilities

- integration patterns

Each package could expose curated knowledge artifacts, metadata, embeddings, semantic indexes, and ownership information.

Then, instead of building every AI use case from scratch, you could compose agents from trusted context domains.

You want to build an agent for finance plus intercontinental supply chain processes?

Fine. Use those two context packages.

You want a support copilot that understands architecture decisions, integration dependencies, and historical incidents?

Fine. Pull those context layers together.

That creates a completely different foundation for AI.

The agent is no longer a disconnected experiment.

It becomes a consumer of enterprise context products.

That is a much stronger model.

The future SDLC will be far more contextual, and far more automated

I do not believe software engineering is going to disappear.

But I do think it is going to change significantly.

I think a growing part of the work will move upstream, closer to the business, closer to process understanding, closer to decision-making, and closer to system intent.

In practice, that means engineers will likely spend more time with business stakeholders asking better questions:

- what are the actual inflection points you want to create?

- what business cases matter most?

- what trade-offs are acceptable?

- what should change in the process, and what should not?

- where do we need flexibility, and where do we need control?

That conversation becomes even more powerful once you have agents operating on top of a strong context system.

You can imagine agents that:

- capture business requirements

- challenge those requirements against the current as-is architecture

- compare them with existing process knowledge

- identify dependencies and conflicts

- propose different implementation options

- surface trade-offs between option A, option B, and option C

- generate specifications for delivery teams

- prepare implementation artifacts for engineering workflows

This is where things get very real.

The future SDLC could become much more automated than what we know today.

Not because humans disappear.

But because humans move to higher-value activities, while more of the translation layer becomes machine-assisted.

Business intent is captured.

Context is retrieved.

Architecture is checked.

Options are proposed.

Specifications are generated.

Delivery workflows are prepared.

Engineering teams review, refine, and execute.

That is not science fiction anymore.

That starts to look like a plausible operating model.

Accessibility and interoperability are now non-negotiable

If this vision makes sense, then a few consequences follow immediately.

First, knowledge has to be accessible.

Not necessarily open to everyone, of course. Access control still matters. But knowledge must be technically accessible through usable formats, APIs, metadata, and reliable ingestion mechanisms.

Second, knowledge has to be interoperable.

That means:

- open formats

- consistent structures

- portable content

- clear identifiers

- links between business concepts and technical implementations

- standards for how context is described and published

Third, knowledge has to be governable over time.

You need to know:

- where it came from

- who owns it

- who changed it

- why it changed

- what was valid at a given moment

- what other artifacts depend on it

In other words, context must be trustworthy.

Without trust, you cannot scale AI on top of it.

ITSM tools should be destinations, not the center of gravity

This also changes how I think about enterprise tools, especially ITSM platforms.

ITSM tools still matter. They can remain valuable operating systems for incidents, problems, changes, requests, and knowledge distribution.

But I do not think they should automatically be treated as the central system of knowledge.

If they have good APIs, good exportability, good integration patterns, and support open content models, great.

If they can consume and publish Markdown-based knowledge, even better.

But if they are closed, hard to integrate, weak on interoperability, and poor at participating in modern context pipelines, then we need to be honest about their role.

They should be treated as one endpoint among others, not as the source around which everything else must orbit.

That is a big shift.

The center should not be the tool.

The center should be the knowledge model and the context engineering architecture around it.

Tools should plug into that model, not define its limits.

Closed platforms are going to struggle

I think we are entering a moment where this becomes unavoidable.

The rise of AI is putting pressure on every enterprise system to become easier to ingest, easier to integrate, easier to version, and easier to understand programmatically.

The success of Markdown and documentation as code is not a coincidence. It reflects a deeper demand for simplicity, openness, portability, and composability.

Platforms that cannot:

- expose knowledge cleanly

- integrate through APIs

- support open content formats

- participate in pipelines

- fit into AI-native architectures

will increasingly feel outdated.

Not because they suddenly stopped working.

But because they do not fit the direction the enterprise needs to go.

The question is no longer just: does this platform support my process?

The more important question is: does this platform contribute cleanly to my enterprise context system?

That is a much tougher test.

And I think it is going to become a major selection criterion in the years ahead.

Final thought

I do not think the next enterprise advantage will come only from having access to better models.

And I do not think it will come only from having more data.

I think it will come from being able to engineer context.

To make distributed knowledge explicit.

To version it.

To govern it.

To connect it to business processes.

To relate it to applications and integrations.

To keep its history.

To make it accessible to both humans and machines.

In many ways, the real challenge is not just to document systems. It is to document the enterprise as a set of domains, bounded contexts, value chains, and transverse flows. Because that is where meaning lives. That is where coordination happens. And that is where AI systems will need clarity if they are to become truly useful inside complex organizations.

The real challenge is not just to connect AI to enterprise data.

The real challenge is to build context systems that connect business meaning, operational knowledge, technical reality, and organizational memory.

And if we do that well, I think we will end up with something much more powerful than better documentation.

We will end up with a shared contextual layer for the enterprise. A place where business, engineering, architecture, support, and operations continuously enrich the same living system of knowledge. A place where the frontline feeds the model, where delivery reuses it, where AI can consume it, and where future systems can be built on top of it.

That, to me, is where the next generation of enterprise applications will be built.

And honestly, that is where some of the most interesting engineering work is about to happen.